Introduction

Network pruning reduces the computation cost of an over-parameterized network without damaging its performance. Prevailing pruning algorithms pre-define the width and depth of the pruned network and then transfer parameters from the unpruned network to it. To break this structural limitation, we propose applying neural architecture search to search directly for a network with flexible channel and layer sizes. The number of channels and layers is learned by minimizing the loss of the pruned network. The feature map of the pruned network is an aggregation of K feature map fragments (generated by K networks of different sizes), which are sampled according to a probability distribution. The loss can be back-propagated not only to the network weights but also to the parameterized distribution, which explicitly tunes the size of the channels and layers. Specifically, we apply channel-wise interpolation to keep feature maps with different channel sizes aligned during aggregation. The size with the maximum probability in each distribution serves as the width or depth of the pruned network, whose parameters are learned by knowledge transfer, e.g., knowledge distillation, from the original network. Experiments on CIFAR-10, CIFAR-100, and ImageNet demonstrate the effectiveness of this new perspective on network pruning compared with traditional pruning algorithms. We also evaluate various search and knowledge transfer approaches to show the effectiveness of each of the two components.
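The width search described above can be illustrated with a minimal forward-pass sketch. It is not the authors' implementation: the function names, the choice to take the first c channels as a fragment, linear interpolation along the channel axis, and renormalizing the probabilities over the sampled candidates are all assumptions made for illustration. Each channel is reduced to a single number so that the example runs with the Python standard library only, and the gradient flow to the distribution parameters is not shown.

```python
import math
import random


def softmax(logits):
    """Turn learnable logits into a probability distribution over sizes."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def interpolate_channels(feature, out_channels):
    """Linearly resample a per-channel vector to out_channels entries.

    Assumption: one scalar stands in for each channel's full H x W map.
    """
    n = len(feature)
    if n == 1 or out_channels == 1:
        return [feature[0]] * out_channels
    out = []
    for i in range(out_channels):
        pos = i * (n - 1) / (out_channels - 1)
        lo = int(math.floor(pos))
        hi = min(lo + 1, n - 1)
        frac = pos - lo
        out.append((1 - frac) * feature[lo] + frac * feature[hi])
    return out


def sample_without_replacement(probs, k, rng):
    """Draw k distinct candidate indices according to probs."""
    remaining = list(range(len(probs)))
    chosen = []
    for _ in range(k):
        weights = [probs[i] for i in remaining]
        pick = rng.choices(remaining, weights=weights, k=1)[0]
        chosen.append(pick)
        remaining.remove(pick)
    return chosen


def aggregate(full_feature, widths, logits, k, rng):
    """Aggregate K fragments of different widths into one feature vector."""
    probs = softmax(logits)
    chosen = sample_without_replacement(probs, k, rng)
    norm = sum(probs[i] for i in chosen)
    target = max(widths[i] for i in chosen)
    out = [0.0] * target
    for i in chosen:
        fragment = full_feature[:widths[i]]  # hypothetical: keep first c channels
        aligned = interpolate_channels(fragment, target)
        weight = probs[i] / norm
        for c in range(target):
            out[c] += weight * aligned[c]
    return out, chosen


def select_width(widths, logits):
    """After the search, keep the size with the maximum probability."""
    probs = softmax(logits)
    return widths[max(range(len(widths)), key=probs.__getitem__)]


rng = random.Random(0)
full = [float(c) for c in range(8)]   # toy 8-channel feature
widths = [2, 4, 8]                    # hypothetical candidate widths
logits = [0.1, 1.5, 0.3]              # hypothetical learned parameters
out, chosen = aggregate(full, widths, logits, k=2, rng=rng)
print(len(out), select_width(widths, logits))
```

In this toy setup the interpolation step is what lets fragments of width 2, 4, or 8 be summed element-wise, and `select_width` mirrors the final step in which the most probable size becomes the width of the pruned layer.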

Figure 1. Searching for the width of a pruned CNN from an unpruned three-layer CNN.

Results

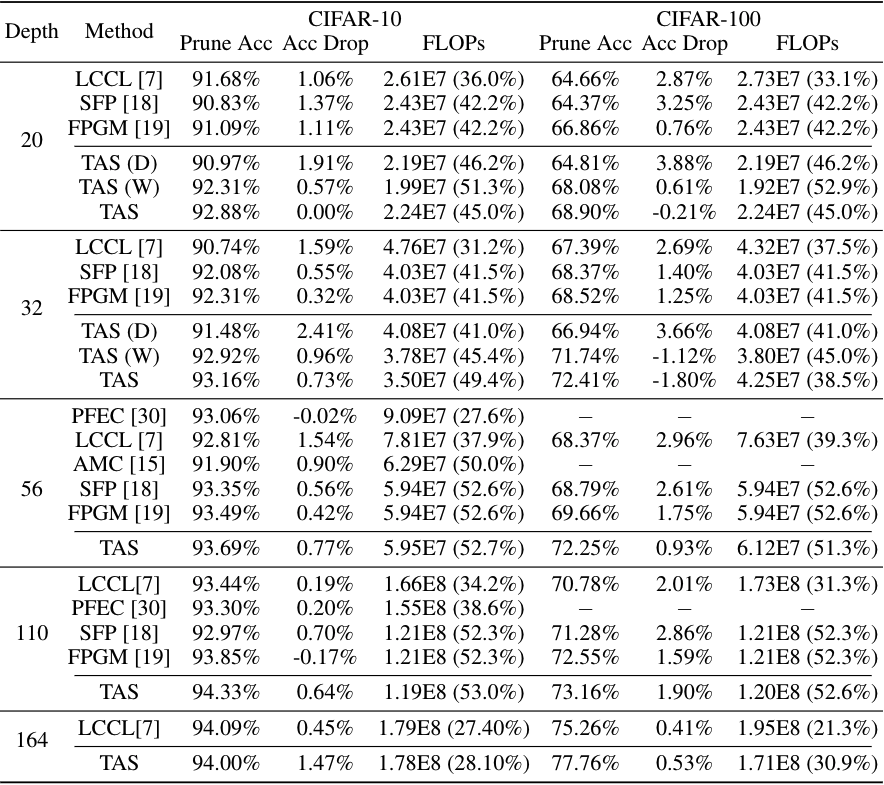

Figure 2. Comparison of different pruning algorithms for ResNet on CIFAR. "Acc" = accuracy, "FLOPs" = FLOPs (pruning ratio), "TAS (D)" = searching for depth, "TAS (W)" = searching for width, "TAS" = searching for both width and depth.

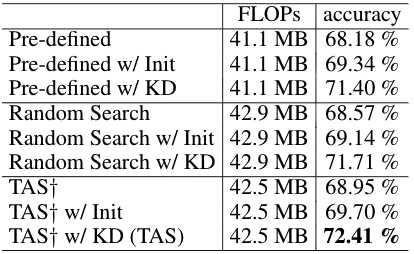

Figure 3. Comparison of different knowledge transfer strategies on (1) a hand-crafted architecture, (2) a randomly searched architecture, and (3) a TAS-searched architecture. We report the accuracy on CIFAR-100 when pruning about 40% of the FLOPs of ResNet-32.
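As one concrete example of a knowledge transfer strategy, the sketch below computes a standard knowledge distillation loss for a single sample: a cross-entropy term on the true label plus a temperature-softened KL term between the unpruned (teacher) and pruned (student) network outputs. This is the commonly used formulation, not necessarily the exact loss used in the paper, and the `temperature` and `alpha` defaults are hypothetical values chosen only for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax with an optional temperature that softens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=4.0, alpha=0.9):
    """Weighted sum of hard-label cross-entropy and soft-target KL divergence.

    temperature and alpha are illustrative assumptions, not values from the paper.
    """
    # Cross-entropy of the student against the ground-truth label.
    p_student = softmax(student_logits)
    ce = -math.log(p_student[label])

    # KL(teacher || student) on temperature-softened distributions.
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    kl = sum(t * (math.log(t) - math.log(s))
             for t, s in zip(p_t, p_s) if t > 0)

    # The T^2 factor keeps the soft-target gradients on a comparable scale.
    return (1 - alpha) * ce + alpha * temperature ** 2 * kl


teacher = [2.0, 0.5, -1.0]   # toy logits from the unpruned network
student = [1.0, 0.8, -0.5]   # toy logits from the pruned network
print(distillation_loss(student, teacher, label=0))
```

When the student reproduces the teacher's logits exactly, the KL term vanishes and only the cross-entropy term remains.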

Video 1. The searched architecture after each training epoch. We show the number of channels (x-axis) for each layer (y-axis). (Use Chrome to play the video.)

Citation

@inproceedings{dong2019tas,
  title     = {Network Pruning via Transformable Architecture Search},
  author    = {Dong, Xuanyi and Yang, Yi},
  booktitle = {Neural Information Processing Systems (NeurIPS)},
  year      = {2019}
}